Understanding AI Document Extraction: A Technical Guide for May 2026

Aman Mishra

Aman Mishra

You upload a contract, run your OCR tool, and watch as tables turn into gibberish and headers merge with body text. AI document extraction software solves this by reading documents the way humans do, understanding spatial relationships and visual hierarchy before extracting fields. We're covering the technical layers that make this work, from schema-driven extraction that beats parse-first approaches to validation strategies that catch errors before they hit your downstream systems.

TLDR:

- AI document extraction converts PDFs and scans into structured data using vision models, OCR, and LLMs working together

- Schema-based extraction outperforms parse-first approaches by preserving layout, tables, and positional context

- Accuracy ranges from 70-85% for handwritten docs to 95-99% for structured forms with proper validation

- Async API patterns with confidence scoring and business rule validation are critical for production reliability

- Unsiloed AI processes 10M+ pages weekly with deterministic extraction, word-level citations, and confidence scores

What Is AI Document Extraction

AI document extraction converts unstructured documents into structured, machine-readable data by combining computer vision, OCR, and vision language models (VLMs). Where traditional OCR scans pixels and outputs raw text, AI extraction understands layout, reading order, and visual hierarchy, treating a document as a structured object instead of a flat string of characters.

The technical pipeline has three layers: a vision model that reads the visual layout of each page, an OCR engine capturing text at word level, and a language model that maps extracted content to a target schema. This architecture handles the full range of real-world document complexity, from multi-column PDFs and scanned forms to tables, charts, and embedded images, where pure text-based OCR consistently breaks down. The result is typed, traceable, schema-aligned data with spatial awareness of where each field originated.

The Evolution From OCR to Vision Language Models

Early document extraction relied on optical character recognition, which converted scanned images into machine-readable text by matching pixel patterns to character templates. OCR worked reasonably well for clean, typed documents but struggled with handwriting, complex layouts, and low-quality scans.

Vision language models changed this by processing document images as complete visual objects. Instead of reading character by character, they interpret spatial relationships, font hierarchies, and contextual meaning together, making extraction far more accurate across messy, real-world documents.

Market Size and Growth Projections

The Document AI market is valued at USD 14.66 billion in 2025, with analysts projecting it to reach USD 27.62 billion by 2030, a CAGR of 13.5%. Three intersecting pressures are driving that growth: digital transformation mandates pushing enterprises to automate manual data entry, tightening regulatory requirements that demand data provenance and auditability for every extracted value, and the sheer volume of unstructured content AI teams need to reliably process at scale. With 80% of enterprise data locked in unstructured formats, document extraction has shifted from a workflow improvement to a core infrastructure requirement.

Core Technical Components of AI Document Extraction

AI document extraction relies on several interlocking technical layers working together. At the base is optical character recognition, which converts raw pixels into machine-readable text. On top of that, document layout analysis identifies structural elements like tables, headers, and columns. Entity extraction then isolates specific data fields such as dates, amounts, and names. In agentic pipelines, an orchestration layer coordinates these steps, routing outputs between models and validating results before writing structured data downstream.

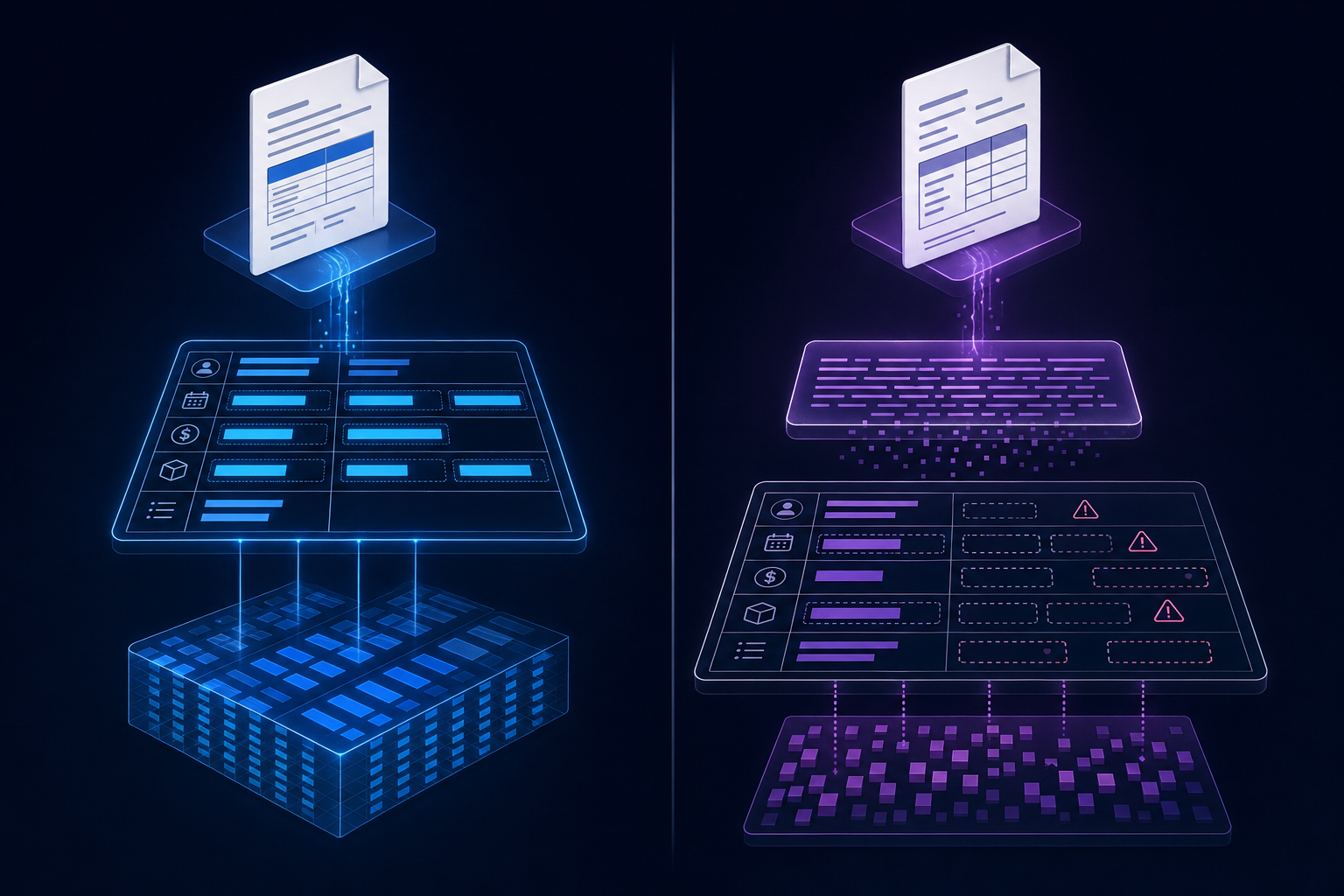

Schema-Based Extraction vs Parse-First Approaches

Schema-based extraction lets you define the output structure upfront, so the model knows exactly what fields to populate before it reads a single character. Parse-first approaches do the opposite: OCR the document, then run extraction over raw text after the fact.

The practical difference shows up in accuracy. When the schema guides extraction directly, the model can resolve ambiguous fields using document context instead of guessing from stripped text. Parse-first pipelines lose layout, table structure, and positional cues early, which compounds errors downstream.

For structured documents like invoices or contracts, schema-driven extraction consistently outperforms parse-first on field recall.

Industry-Specific Use Cases

AI document extraction serves distinct roles across industries. In financial services, it pulls structured data from invoices, contracts, and statements. Healthcare teams process clinical notes and insurance forms. Legal departments extract clauses and obligations from lengthy agreements. Logistics companies parse bills of lading and customs declarations. Government agencies handle high-volume form processing at scale. Each context demands different accuracy thresholds, field types, and validation logic, which is why general-purpose OCR rarely satisfies domain-specific requirements without additional configuration.

Accuracy Requirements and Validation Strategies

Extraction accuracy varies widely depending on document complexity, model choice, and validation design. For structured forms, top systems reach 95 to 99% accuracy. For semi-structured or handwritten documents, that figure can drop to 70 to 85%. Building a reliable pipeline means accounting for both ends of that range.

A few approaches consistently improve output quality:

- Run confidence score thresholds on every extracted field, routing low-confidence results to human review queues instead of passing them downstream unchecked.

- Cross-validate extracted values against known business rules, such as date range constraints or field-level format patterns, to catch plausible but incorrect outputs.

- Log extraction failures by document type so you can identify which templates or layouts degrade accuracy most, then retrain or fine-tune targeted to those gaps.

Document Extraction Approaches Compared

Feature | Unsiloed AI | Google Document AI | Open Source Tools |

|---|---|---|---|

Extraction Method | Schema-based with vision language models, layout-aware parsing, and confidence scoring per field | Pre-trained models with template-based processors for common document types | Parse-first OCR with custom post-processing pipelines requiring manual configuration |

Accuracy Range | 95-99% for structured forms, 85-95% for semi-structured documents with validation layers | 90-95% for supported document types, lower for custom formats without training | 70-85% baseline, highly dependent on implementation quality and tuning effort |

Deployment Options | Cloud API, on-premises, and hybrid configurations with identical feature parity | Cloud-only managed service through Google Cloud | Full control over deployment environment but requires infrastructure management and scaling expertise |

Integration Complexity | REST API with async job patterns, presigned URL support, and JSON schema definitions | REST API requiring GCP account setup, IAM configuration, and quota management | Self-hosted services requiring custom API layer, queue management, and model orchestration |

Cost Structure | Per-page pricing with volume discounts, no infrastructure overhead | Per-page pricing that scales expensively with volume, plus GCP infrastructure costs | Low marginal cost per page but high engineering investment for development and maintenance |

Table Handling | Preserves multi-page table structure with row-column relationships and spatial context | Handles simple tables well, struggles with complex nested or multi-page table structures | Tables frequently collapse into plain text requiring extensive custom parsing logic |

API Design Patterns for Document Extraction

Document extraction APIs are almost always asynchronous. Processing a dense, multi-page PDF takes seconds to minutes, so submitting a job and polling for results is far more reliable than waiting on a synchronous response that will time out in production.

The standard pattern: POST your document to get a job_id, then GET that job's status endpoint until it returns Succeeded or Failed. For documents already in cloud storage, pass a presigned URL instead of uploading the file directly, which removes unnecessary data transfer and fits naturally into S3, GCS, or Azure Blob workflows.

For error handling, treat every non-success status as a routing decision. A Failed status needs different logic than a timeout or a schema validation error. Build explicit handlers for each case, log the raw error response, and retry transient failures with backoff before surfacing them immediately.

Integrating Document Extraction Into RAG Pipelines

Extracted text from PDFs rarely maps cleanly into a retrieval-augmented generation (RAG) system. Headers get merged with body text, table values lose their row context, and multi-column layouts collapse into a single stream. Before any vector embedding happens, the document structure needs to be preserved.

Good extraction pipelines chunk content by semantic unit instead of character count, keeping table rows intact, maintaining heading hierarchy, and separating figures from prose. This structural fidelity directly affects retrieval precision and, downstream, the quality of LLM responses grounded in those chunks.

Deployment Options: Cloud, On-Premises, and Hybrid

Cloud deployments work for most teams: low setup overhead, REST API access, and no infrastructure to manage. On-premises or air-gapped setups serve compliance-driven industries where regulatory mandates or data residency rules prevent sending documents to external endpoints. Hybrid splits workloads by sensitivity, keeping the most restricted documents local while routing standard processing to the cloud.

How Unsiloed AI Delivers Production-Ready Document Extraction

Unsiloed AI is designed for teams that need reliable document extraction in production environments. The system goes beyond basic OCR by combining layout-aware parsing, LLM-based field extraction, and confidence scoring into a single API. Whether you're processing invoices, contracts, or medical records, the extraction pipeline handles complex layouts, multi-page documents, and mixed content types without requiring extensive prompt engineering or custom model training on your end.

Final Thoughts on Choosing Document Extraction Technology

The gap between basic OCR and modern AI document processing shows up fastest when you feed the system messy, real-world files. Schema-based extraction handles complex layouts, preserves table structure, and gives you confidence scores on every field, which matters when you're building pipelines that need to scale beyond a few hundred documents a month. Think through your accuracy requirements before committing to a solution, because retraining or switching providers mid-project is expensive. Book a demo to test extraction against your actual document set. Your extraction pipeline should make downstream systems more reliable, not create new data quality problems to solve.

FAQ

What's the main difference between OCR and AI document extraction?

OCR converts pixels into text character by character, while AI document extraction understands layout, reading order, and visual hierarchy to produce structured, schema-aligned data. AI extraction preserves spatial relationships and context that pure OCR loses, making it far more accurate for complex documents like multi-column PDFs, tables, and forms.

Can I use AI document extraction without building custom models?

Yes. Modern extraction APIs handle complex layouts, multi-page documents, and mixed content types through REST endpoints without requiring model training or extensive prompt engineering on your end. You define the output schema, submit documents via API, and receive structured JSON with confidence scores and field-level citations.

How do you validate extraction accuracy in production?

Run confidence score thresholds on every extracted field and route low-confidence results to human review queues instead of passing them downstream. Cross-validate outputs against business rules like date ranges or format patterns, and log failures by document type so you can identify which templates degrade accuracy most for targeted improvement.

Google Document AI vs open source extraction tools?

Google Document AI offers managed infrastructure and pre-trained models but can become expensive at scale and locks you into their ecosystem. Open source tools give you full control and lower costs but require substantial engineering effort to handle edge cases, maintain accuracy, and scale reliably. For production systems processing millions of pages, purpose-built extraction APIs often deliver better accuracy with less setup overhead than either approach.

When should you use schema-based extraction instead of parse-first approaches?

Use schema-based extraction when you need high accuracy on structured documents like invoices, contracts, or forms. The schema guides extraction directly using document context and layout, while parse-first approaches lose table structure and positional cues early, which compounds errors downstream. If field recall matters more than flexibility, schema-driven extraction consistently outperforms.