Chunking Strategy for RAG Systems: A Technical Implementation Guide (May 2026)

Aman Mishra

You built a RAG system and the retrieval quality is all over the place. Some queries return perfect context; others pull in irrelevant paragraphs or cut off right before the answer. Often the problem isn't your vector database or your prompt engineering. It's how you're splitting source documents before indexing them, and the wrong chunking strategy for RAG will tank your results no matter how much you optimize downstream. We'll break down fixed-size, recursive, semantic, and agentic chunking, explain when each one makes sense, and show you how to tune chunk size and overlap based on what your users actually ask for.

TLDR:

- Match chunk size to your query type: 512-1024 tokens for analytical queries requiring multi-concept reasoning, 128-256 tokens for factoid lookups like dates or names

- Start with recursive chunking at 512 tokens with 15-20% overlap as your baseline before testing semantic or agentic methods

- Measure both retrieval hit rate and answer accuracy on your actual documents and queries, not theoretical benchmarks

- Parse PDFs with layout-aware tools before chunking to preserve tables, headers, and reading order across complex formats

- Apply 10-20% overlap relative to chunk size to prevent context loss at boundaries without bloating your index

Understanding Chunking Strategy for RAG Systems

Chunking is the process of splitting source documents into smaller, self-contained text segments before they get indexed in a vector store. In a retrieval-augmented generation (RAG) system, a user query triggers the retrieval layer to find the most semantically similar chunks and pass them as context to an LLM. What the LLM generates depends directly on what those chunks contain.
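To make that flow concrete, here is a minimal sketch of the retrieval step. It uses a toy bag-of-words vector in place of a real embedding model. The helpers embed, cosine, retrieve and build_prompt are hypothetical illustrations, not any library's API. The point is the shape of the pipeline: score every chunk against the query, keep the top k, and hand those chunks to the LLM as context.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words term-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, chunks: list[str], k: int = 2) -> str:
    """Assemble the retrieved chunks into the context passed to the LLM."""
    context = "\n\n".join(retrieve(query, chunks, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Whatever the chunker produced is all the LLM ever sees. If the warranty sentence had been split in half, no amount of ranking would recover it.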

Get the segmentation wrong and the retrieval layer returns incomplete fragments or bloated passages that bury the relevant signal. Get it right and even dense, multi-page documents become reliably queryable.

Why Chunking Strategy Determines RAG Performance

Retrieval quality in RAG systems is largely determined before any query is processed. The way you split source documents sets a hard ceiling on what your retriever can return and what your LLM can reason over.

Chunks that are too large dilute relevant signals with unrelated content. Chunks that are too small lose the surrounding context needed to answer questions accurately. Neither failure mode shows up cleanly in isolation; both degrade answer quality in ways that are easy to misattribute to the model itself.

Getting chunking right is the foundational step every RAG pipeline depends on.

Fixed-Size Chunking: The Baseline Approach

Fixed-size chunking splits text at a predetermined token or character count, regardless of content. No semantic signals, just a fixed window sliding across the document.

It suits homogeneous text and fast prototyping. When your content lacks meaningful structure, the crude split often works well enough to get a pipeline running quickly.

The core problem is boundary blindness. A split can land mid-sentence, mid-table, or mid-argument, leaving chunks without necessary context. Overlap mitigates this by repeating a portion of tokens between adjacent chunks. A 10-20% overlap relative to chunk size is a reasonable starting point, though the right value depends on content density and query patterns.
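A sliding window with overlap takes only a few lines. The sketch below assumes that whitespace-separated words stand in for model tokens; a real pipeline would count tokens with the embedding model's tokenizer. The function name fixed_size_chunks is hypothetical.

```python
def fixed_size_chunks(text: str, chunk_size: int = 512, overlap: int = 64) -> list[str]:
    """Split text into windows of chunk_size "tokens" that share `overlap`
    tokens with the previous window. Whitespace-separated words stand in
    for real model tokens here."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    tokens = text.split()
    step = chunk_size - overlap  # how far the window slides each time
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(" ".join(tokens[start:start + chunk_size]))
        if start + chunk_size >= len(tokens):  # this window reached the end
            break
    return chunks
```

With the defaults, 64 of 512 tokens (12.5%) repeat between neighbours, which sits inside the 10-20% range above. The split points ignore sentences and tables entirely, and that is exactly the boundary blindness described.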

Use fixed-size chunking to create a baseline. It gives you a clear reference point to measure how much a smarter strategy actually improves retrieval quality.

Recursive Chunking: Respecting Document Structure

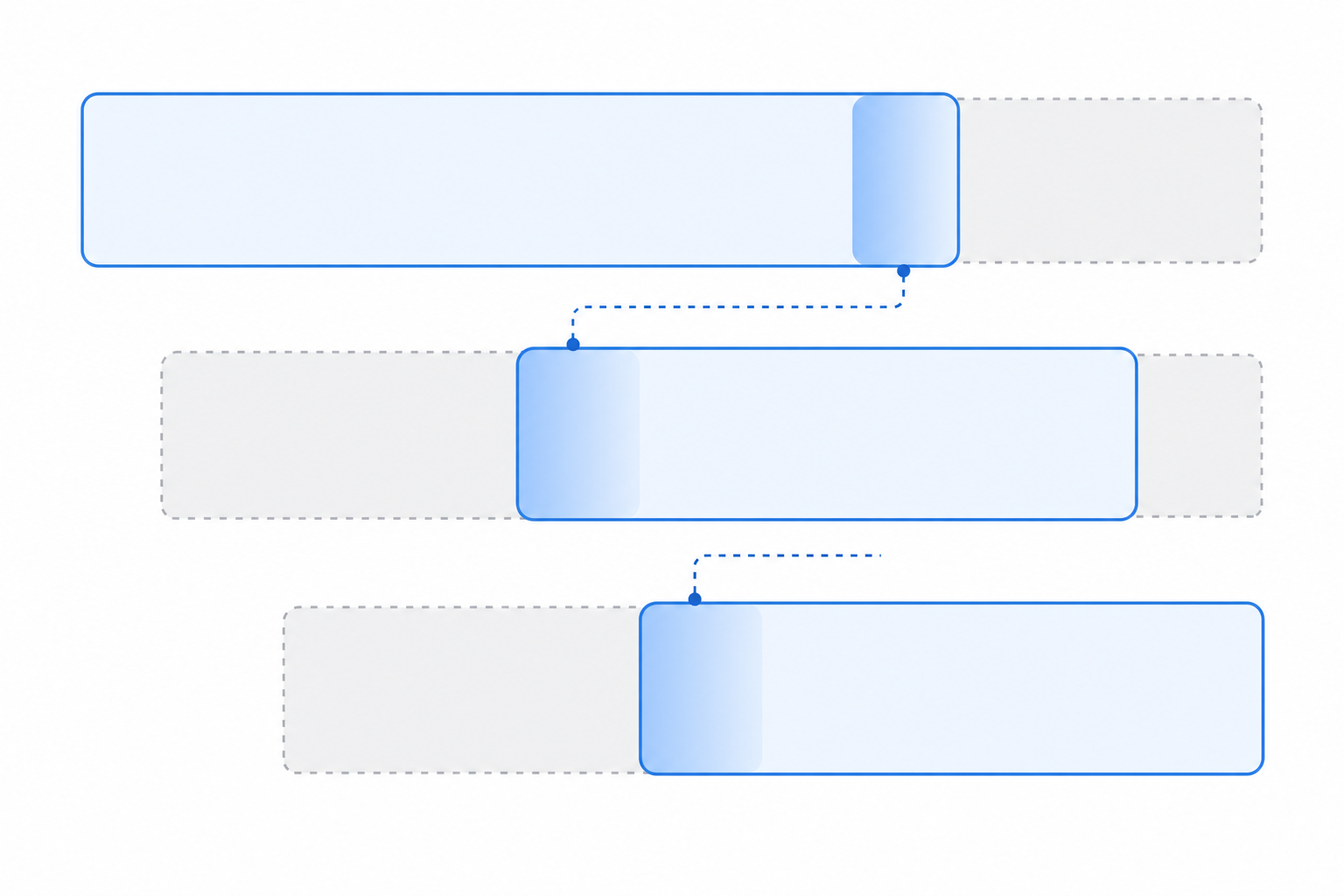

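Recursive chunking applies a ranked separator hierarchy in sequence: paragraph breaks first, then single newlines, then sentence boundaries, then spaces between words, and finally individual characters as a last resort. When a segment still exceeds the target size after splitting on one separator, the algorithm recurses to the next level. The exact list is configurable. LangChain's default, for example, goes straight from newlines to spaces unless you add sentence-ending separators.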

This keeps chunks aligned with natural document boundaries. A paragraph stays intact until it's too long, and only then does it split at sentence breaks, producing fewer truncated arguments and better context per chunk than a fixed window.
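The recursion can be sketched in plain Python. This is a simplified illustration, not LangChain's implementation. It measures size in characters, and it uses a hypothetical separator ladder with a ". " sentence level. Pieces are merged greedily at each level, and only a piece that is still too long drops down to the next, finer separator.

```python
# Hypothetical separator ladder: coarsest first, "" means "cut anywhere".
SEPARATORS = ("\n\n", "\n", ". ", " ", "")


def recursive_split(text: str, max_chars: int = 200,
                    separators: tuple = SEPARATORS) -> list[str]:
    """Split text into chunks of at most max_chars, preferring coarse separators."""
    if len(text) <= max_chars:
        return [text.strip()] if text.strip() else []
    if not separators or separators[0] == "":
        # Last resort: hard cut every max_chars characters.
        return [text[i:i + max_chars] for i in range(0, len(text), max_chars)]
    sep, finer = separators[0], separators[1:]
    if sep not in text:
        return recursive_split(text, max_chars, finer)  # try the next level down
    parts = text.split(sep)
    # Re-attach the separator to each piece so no characters are dropped.
    pieces = [p + sep for p in parts[:-1]] + [parts[-1]]
    chunks, current = [], ""
    for piece in pieces:
        if len(current) + len(piece) <= max_chars:
            current += piece  # still fits: keep merging at this level
            continue
        if current.strip():
            chunks.append(current.strip())
        if len(piece) <= max_chars:
            current = piece
        else:
            # This piece alone is too long: recurse with the finer separators.
            chunks.extend(recursive_split(piece, max_chars, finer))
            current = ""
    if current.strip():
        chunks.append(current.strip())
    return chunks
```

With max_chars=30, a short paragraph stays whole. A longer paragraph breaks at its sentence boundaries instead of at an arbitrary character offset.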

LangChain's RecursiveCharacterTextSplitter documentation details the standard implementation, which performs well across prose, documentation, and lightly structured text without requiring semantic models or extra infrastructure. For RAG systems, it's the right default to benchmark against before reaching for more advanced methods.

Semantic Chunking: Meaning-Driven Segmentation

Semantic chunking groups text by embedding similarity rather than character count. Each sentence is embedded, cosine similarity is computed between consecutive sentences, and a new chunk begins wherever that similarity drops below a set threshold. This keeps topically related sentences together, so chunk length varies with how long each topic runs.

The similarity threshold is the key tuning parameter. A high threshold (e.g., a cosine similarity of 0.85) splits at even small topic shifts. That keeps chunks tightly focused on a single topic but produces many small segments. A low threshold (e.g., 0.65) splits only at sharper drops, grouping more loosely related sentences into larger chunks that preserve broader argument flow. A value around 0.75 is a common starting point. Adjust it based on observed boundary quality, keeping in mind that useful values depend on the embedding model, since different models distribute similarity scores differently.

In practice, you embed each sentence using a model like text-embedding-3-small or a local sentence-transformers model. You then compute the cosine similarity between each pair of adjacent sentences and split wherever it falls below the threshold. LangChain's SemanticChunker (in the langchain_experimental package) implements this pattern. Rather than a single fixed cutoff, it picks split points from the distribution of adjacent-sentence distances, with percentile, standard deviation, and interquartile range methods among the options.
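The core loop is short. This sketch uses a fixed threshold, as described above, and a deliberately crude bag-of-words vector as a stand-in for a real embedding model. In this toy, "similar" means "shares words", so the scores are not comparable to those from text-embedding-3-small or sentence-transformers. The function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(sentence: str) -> Counter:
    """Toy stand-in for a sentence embedding model: a bag-of-words vector."""
    return Counter(re.findall(r"[a-z]+", sentence.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(v * b[k] for k, v in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(text: str, threshold: float = 0.75) -> list[str]:
    """Start a new chunk wherever adjacent-sentence similarity drops below threshold."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    if not sentences:
        return []
    vectors = [embed(s) for s in sentences]
    chunks, current = [], [sentences[0]]
    for prev_vec, vec, sentence in zip(vectors, vectors[1:], sentences[1:]):
        if cosine(prev_vec, vec) < threshold:
            chunks.append(" ".join(current))  # topic shift: close the current chunk
            current = []
        current.append(sentence)
    chunks.append(" ".join(current))
    return chunks
```

Try it on four sentences, two about solar panels followed by two about a recipe. At threshold=0.5 the sketch returns two topical chunks. At 0.75 every sentence becomes its own chunk, which illustrates how a higher threshold yields more, smaller segments.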

This approach aligns chunk boundaries with topic transitions, so retrieved passages carry coherent context instead of fragments of interrupted thoughts. The tradeoff is cost: embedding every sentence before chunking adds latency and compute overhead that fixed-size methods avoid. In production, semantic chunking tends to pay off when your documents cover multiple distinct topics per page and retrieval precision matters more than indexing speed.

Agentic Chunking: AI-Driven Document Segmentation

Agentic chunking takes a fundamentally different approach by using an LLM to make segmentation decisions. Instead of applying fixed rules or statistical thresholds, an agent reads the document and decides where boundaries belong based on content meaning.

This approach handles complex documents where structure is implicit, such as legal contracts, research papers, or multi-topic reports. The LLM can reason about context across paragraphs and group content the way a human editor would.
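One common way to wire this up is to number the paragraphs, ask the model for the indices where new sections start, and validate the reply before trusting it. The sketch below takes the model as a plain callable (prompt in, text out), so it works with any LLM client. The prompt wording, the JSON reply format and the function names are all hypothetical choices.

```python
import json
from typing import Callable


def build_boundary_prompt(paragraphs: list[str]) -> str:
    """Number each paragraph so the model can answer with indices only."""
    numbered = "\n".join(f"[{i}] {p}" for i, p in enumerate(paragraphs))
    return (
        "Group these paragraphs into self-contained sections. "
        "Reply with a JSON list of the paragraph indices where a new section starts.\n\n"
        + numbered
    )


def agentic_chunks(paragraphs: list[str], llm: Callable[[str], str]) -> list[str]:
    """Ask an LLM where section boundaries belong, then group paragraphs accordingly."""
    if not paragraphs:
        return []
    reply = llm(build_boundary_prompt(paragraphs))
    try:
        starts = {int(i) for i in json.loads(reply)}
    except (ValueError, TypeError):
        # Unparseable reply: fall back to one chunk per paragraph.
        starts = set(range(len(paragraphs)))
    # Keep only in-range indices and always start a chunk at paragraph 0.
    starts = sorted({i for i in starts if 0 < i < len(paragraphs)} | {0})
    bounds = starts + [len(paragraphs)]
    return ["\n\n".join(paragraphs[a:b]) for a, b in zip(bounds, bounds[1:])]
```

The validation step matters in practice. Model output is untrusted input, so out-of-range or malformed indices should degrade gracefully rather than crash indexing.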

The tradeoff is cost and latency. Every document has to be read by an LLM before it can be indexed, often over several calls for long texts, which makes agentic chunking slower and more expensive than rule-based or semantic methods.

Optimizing Chunk Size and Overlap Parameters

Chunk size selection depends on what your queries ask for. Factoid queries (narrow lookups for a specific date, name, or value) retrieve better from smaller chunks (128-256 tokens) because the answer is isolated without surrounding noise. Analytical queries requiring reasoning across multiple concepts need larger windows (512-1024 tokens) to preserve the broader argument.

NVIDIA's research on chunking strategies shows how query type directly influences optimal chunk parameters.

| Query Type | Recommended Size | Overlap |
|---|---|---|
| Factoid / narrow lookup | 128-256 tokens | 10-15% |
| Mixed workload baseline | 512 tokens | 15-20% |
| Analytical / multi-concept | 768-1024 tokens | 20% |

Overlap should scale with chunk size. For 512-token chunks, 50-100 tokens covers most sentence boundaries without dropping critical context. Beyond 20%, you add index bloat without meaningful recall improvement. Start with 512 tokens and 15% overlap, then adjust based on retrieval quality across your actual query distribution.
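The index-bloat side of the tradeoff is simple arithmetic. Each window after the first adds only chunk_size - overlap new tokens, so the chunk count grows as overlap rises. The sketch below assumes a hypothetical 100,000-token corpus; the function names are illustrative.

```python
import math


def overlap_tokens(chunk_size: int, overlap_ratio: float) -> int:
    """Convert an overlap percentage into a token count, e.g. 15% of 512."""
    return round(chunk_size * overlap_ratio)


def chunk_count(doc_tokens: int, chunk_size: int, overlap: int) -> int:
    """Windows needed to cover doc_tokens when each window slides by chunk_size - overlap."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be in [0, chunk_size)")
    if doc_tokens <= 0:
        return 0
    if doc_tokens <= chunk_size:
        return 1
    step = chunk_size - overlap
    # The first window covers chunk_size tokens; each later one adds `step` new tokens.
    return math.ceil((doc_tokens - overlap) / step)


if __name__ == "__main__":
    corpus_tokens = 100_000  # hypothetical corpus size
    baseline = chunk_count(corpus_tokens, 512, 0)
    for ratio in (0.0, 0.10, 0.15, 0.20):
        ov = overlap_tokens(512, ratio)
        n = chunk_count(corpus_tokens, 512, ov)
        print(f"{ratio:.0%} overlap ({ov} tokens): {n} chunks, {n / baseline - 1:+.0%} vs. no overlap")
```

For 512-token chunks this gives 196 chunks with no overlap, 217 at 10% (51 tokens), 230 at 15% (77 tokens) and 244 at 20% (102 tokens). At 20% overlap the index is therefore about a quarter larger than with none, which is the bloat that has to be weighed against recall.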

Document Structure-Aware Chunking Methods

Document structure-aware chunking respects the inherent organization of source files instead of splitting text arbitrarily. For PDFs and HTML documents, that includes preserving headers, paragraphs, tables, and lists as natural chunk boundaries.

Tools like partition_pdf from the Unstructured library parse layout elements directly, letting you chunk by section heading or content type. This keeps tables intact and prevents a heading from being separated from its associated body text.
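Once a layout parser has labelled each element, chunking by section is a grouping pass. The sketch below does not call Unstructured. It assumes the parser's output has already been mapped to a hypothetical Element record whose category labels ("Title", "NarrativeText", "Table") mimic that style. Each Title opens a new chunk, and elements are never split, so tables stay intact.

```python
from dataclasses import dataclass


@dataclass
class Element:
    category: str  # layout label, e.g. "Title", "NarrativeText", "Table"
    text: str


def chunk_by_section(elements: list[Element], max_chars: int = 1500) -> list[str]:
    """Group parsed layout elements into chunks that start at each Title.

    Elements are never split, so a Table always lands whole in one chunk,
    even if that chunk then exceeds max_chars.
    """
    chunks: list[str] = []
    current: list[str] = []
    heading = ""
    for el in elements:
        if el.category == "Title":
            if current:
                chunks.append("\n".join(current))
            current, heading = [el.text], el.text  # heading opens the new chunk
            continue
        size = sum(len(t) + 1 for t in current)
        if current and current != [heading] and size + len(el.text) > max_chars:
            chunks.append("\n".join(current))
            # Repeat the heading so the continuation chunk keeps its context.
            current = [heading] if heading else []
        current.append(el.text)
    if current:
        chunks.append("\n".join(current))
    return chunks
```

Repeating the heading in continuation chunks is a design choice, not a requirement. It costs a few tokens per chunk but keeps every chunk attributable to its section at retrieval time.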

For code files, splitting at function or class boundaries preserves logical units. Markdown documents split cleanly at heading levels, maintaining the author's intended hierarchy throughout retrieval.
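Both cases can be sketched with the standard library. For Python source, the ast module gives exact start and end lines for each top-level function or class. For Markdown, a line scan splits at headings up to a chosen level and records the heading path as metadata. The function names are hypothetical. The Markdown scan also ignores fenced code blocks, so a "#" comment inside a fence would be misread as a heading.

```python
import ast
import re


def split_python_by_definition(source: str) -> list[str]:
    """One chunk per top-level function or class, decorators included."""
    tree = ast.parse(source)
    lines = source.splitlines()
    chunks = []
    for node in tree.body:
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef)):
            start = min([node.lineno] + [d.lineno for d in node.decorator_list]) - 1
            chunks.append("\n".join(lines[start:node.end_lineno]))
    return chunks


def split_markdown_by_heading(markdown: str, max_level: int = 2) -> list[dict]:
    """Split at headings up to max_level; each chunk keeps its heading line and path."""
    sections, path, body = [], [], []

    def flush():
        text = "\n".join(body).strip()
        if text:
            sections.append({"path": " > ".join(path), "text": text})
        body.clear()

    for line in markdown.splitlines():
        m = re.match(r"^(#{1,6})\s+(.*)", line)
        if m and len(m.group(1)) <= max_level:
            flush()
            level = len(m.group(1))
            path[:] = path[:level - 1] + [m.group(2).strip()]  # update the heading path
        body.append(line)
    flush()
    return sections
```

Deeper headings (### and below with max_level=2) stay inside their parent section. The path metadata, such as "Guide > Install", preserves the author's hierarchy even after the chunk is retrieved on its own.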

Measuring Chunking Strategy Performance

No test beats your own documents and queries. Theoretical benchmarks are a starting point at best; what matters is how a chunking strategy performs on your actual content and query distribution.

Retrieval metrics like precision@k tell you whether relevant chunks surface, but not whether the LLM generates correct answers from them. Score both retrieval hit rate and answer accuracy on a representative query set. That pairing is the only reliable signal for what works on your specific data.
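Scoring both signals over the same labelled query set can look like the sketch below. It assumes you have hand-labelled which chunk IDs contain each answer, and it uses case-insensitive substring matching as a crude stand-in for human or LLM-judge grading. EvalCase and the metric functions are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class EvalCase:
    query: str
    relevant_chunk_ids: set[str]  # chunks known to contain the answer (hand-labelled)
    expected_answer: str


def hit_rate_at_k(cases: list[EvalCase], retrieved: dict[str, list[str]], k: int = 5) -> float:
    """Fraction of queries with at least one relevant chunk in the top k results."""
    hits = sum(1 for c in cases if c.relevant_chunk_ids & set(retrieved[c.query][:k]))
    return hits / len(cases)


def answer_accuracy(cases: list[EvalCase], answers: dict[str, str]) -> float:
    """Fraction of queries whose generated answer contains the expected answer.
    Substring matching is a crude stand-in for human or LLM-judge grading."""
    correct = sum(1 for c in cases if c.expected_answer.lower() in answers[c.query].lower())
    return correct / len(cases)
```

Reporting the two numbers side by side exposes disagreements. For example, a query answered correctly despite a retrieval miss suggests the model relied on prior knowledge rather than your chunks. Scoring only one metric hides that.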

Layout-Aware Parsing Before Chunking

Before any chunking strategy takes effect, the underlying document structure must be accurately parsed. PDFs, in particular, present real challenges: multi-column layouts, embedded tables, headers, and footnotes can all fragment text in ways that corrupt chunk boundaries.

Layout-aware parsers like partition_pdf from the Unstructured library detect these structural elements before chunking begins, preserving logical reading order across complex document formats.
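Reading order is a concrete example of what goes wrong without layout awareness. Sorting text blocks purely top-to-bottom interleaves the two columns of a two-column page. The deliberately simplified sketch below assumes a fixed number of equal-width columns, which real layout parsers infer rather than assume. TextBlock and reading_order are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class TextBlock:
    x0: float  # left edge, in page coordinates
    y0: float  # top edge (y grows downward here)
    text: str


def reading_order(blocks: list[TextBlock], page_width: float, columns: int = 2) -> list[str]:
    """Order blocks column by column, top to bottom within each column.
    Assumes equal-width columns; real parsers detect the layout instead."""
    col_width = page_width / columns

    def key(b: TextBlock):
        column = min(int(b.x0 // col_width), columns - 1)
        return (column, b.y0)

    return [b.text for b in sorted(blocks, key=key)]
```

A naive sort by vertical position would yield left-right-left-right. That order is exactly the kind of fragmentation that corrupts chunk boundaries before any chunking strategy runs. Full-width headers and footnotes need extra handling that this sketch omits.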

Final Thoughts on Building Reliable RAG Chunking

No single chunking strategy for RAG works across every document type or query pattern. Recursive chunking gives you a strong baseline, but what matters is testing with your content and measuring both retrieval hit rate and answer accuracy. Layout-aware parsing before chunking keeps tables and sections intact, which matters more than most teams realize.

FAQ

What's the best chunking strategy for RAG systems?

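Start with recursive chunking using LangChain's RecursiveCharacterTextSplitter at 512 tokens with 15-20% overlap. The splitter measures length in characters by default, so create it with RecursiveCharacterTextSplitter.from_tiktoken_encoder if you want sizes counted in tokens. It respects document structure, works across most content types, and provides a solid baseline to measure against before investing in semantic or agentic methods.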

Semantic chunking vs agentic chunking: which should I use?

Semantic chunking groups text by meaning using embedding similarity and works well for topic-driven documents with moderate compute overhead. Agentic chunking uses an LLM to make segmentation decisions, handling complex implicit structures but costing more per document. Choose semantic for production workloads and agentic when document complexity warrants the latency and expense.

How does chunking help working memory in RAG systems?

Chunking breaks large documents into retrieval-sized segments that fit within LLM context windows, allowing the model to focus on relevant passages instead of processing entire documents. Smaller, well-bounded chunks reduce noise in retrieved context and improve answer accuracy by isolating the signal your query actually needs.

Can I use fixed-size chunking for production RAG?

You can, though it works best as a baseline. Fixed-size chunking with 10-20% overlap handles homogeneous text and fast prototyping, but it splits mid-sentence or mid-table without respecting content boundaries. Use it to set a performance floor, then move to recursive or semantic methods for better retrieval quality.

When should I adjust chunk size and overlap parameters?

Adjust when your query distribution changes. Factoid queries (dates, names, specific values) perform better with 128-256 token chunks, while analytical queries requiring multi-concept reasoning need 512-1024 tokens. Test on your actual documents and queries, measuring both retrieval hit rate and answer accuracy before committing to new parameters.